In Short: The Narrative Is Wrong

The dominant narrative in 2026 is that AI is making junior data engineers obsolete. Most consultancy leaders nod along in public while questioning their grad programmes in private. The narrative is wrong, and the consultancies acting on it are setting themselves up for a capability crisis in three to five years.

The role AI is actually making obsolete is not junior data engineering. It is rote mid-level data engineering, performed at a senior price tag, by people who never developed judgement. The conflation of these two things is the most expensive mistake in our industry right now.

This article is a long-form version of a position we have taken internally at Solv. Other firms are welcome to disagree.

The Conventional Wisdom, Stated Plainly

The argument that AI makes junior data engineers obsolete runs like this. AI coding assistants now produce working pipelines, working notebooks, working SQL, and working Power BI models on the first try. The mechanical work that used to consume a junior's first two years has collapsed. A senior engineer with a Copilot subscription can do the work of a junior in a fraction of the time. Therefore, the junior is redundant. Therefore, stop hiring them. Therefore, restructure the team toward more seniors and fewer juniors. Therefore, cut the grad programme.

This is the slide deck at every consultancy partners' meeting in 2026. It is internally coherent. It is also wrong, and the firms acting on it are confusing a short-term cost optimisation for a long-term strategy.

What AI Actually Does to Data Engineering

The shift AI causes is real. The shape of that shift is not what the conventional wisdom describes.

AI raises the floor on the mechanical parts of data engineering. Generating boilerplate. Translating between SQL dialects. Writing pagination logic. Producing first-pass transformations. Building scaffolding for a new pipeline. These tasks used to take meaningful time. They now take minutes. The floor of what any data engineer can produce, regardless of experience, has gone up significantly.

The ceiling has gone up too, and this is the part the conventional wisdom misses. The hard problems in data engineering were never the mechanical ones. They were the modelling decisions, the conformance questions, the late-binding versus early-binding trade-offs, the question of which grain a fact table should live at, the choice between SCD Type 2 and a temporal pattern, the decision about which conformed dimension should govern which mart. AI does not solve these problems. AI confidently produces solutions that are subtly wrong, and the only person who can catch them is someone who already understands what right looks like.

A senior engineer prompting an AI to fix a poorly-modelled star schema gets a star schema that runs without errors and answers the wrong questions correctly. The technical floor has risen. The judgement ceiling has risen with it. The gap between the two is exactly where data engineering still happens.
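To make "runs without errors and answers the wrong questions correctly" concrete, here is a minimal sketch in Python using an in-memory SQLite database. The tables, columns, and figures are hypothetical and chosen only for illustration. It shows one common form of the problem, a join that mixes two grains: shipping cost is recorded once per order, but the join repeats it once per order line.

```python
import sqlite3

# Hypothetical tables: order_lines is at order-line grain,
# order_shipping is at order grain (one row per order).
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE order_lines (order_id INTEGER, product TEXT, line_amount REAL);
    CREATE TABLE order_shipping (order_id INTEGER, shipping_cost REAL);
    INSERT INTO order_lines VALUES
        (1, 'widget', 40.0), (1, 'gadget', 60.0), (2, 'widget', 40.0);
    INSERT INTO order_shipping VALUES (1, 10.0), (2, 5.0);
""")

# This query is valid SQL and runs cleanly, but order 1 has two lines,
# so its shipping cost is counted twice.
naive_total = conn.execute("""
    SELECT SUM(s.shipping_cost)
    FROM order_lines AS l
    JOIN order_shipping AS s ON s.order_id = l.order_id
""").fetchone()[0]

# Summing the measure at its own grain gives the real figure.
correct_total = conn.execute(
    "SELECT SUM(shipping_cost) FROM order_shipping"
).fetchone()[0]

print(naive_total, correct_total)  # 25.0 15.0
```

Nothing in the first query fails, and nothing in its output looks unusual on its own. Spotting that 25.0 is wrong requires knowing which grain each table lives at, which is a modelling question rather than a syntax question.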

The Role That Is Actually Obsolete

The mid-level data engineer who stopped developing judgement five years ago is the role that should worry.

This is the engineer who can write functional pipelines from a clear specification, who knows the platform-specific syntax, who can follow established patterns, but who does not design data models from first principles, who does not push back on bad requirements, who does not catch the conceptual mistake before it becomes a year of remediation work. In 2020, this engineer was valuable because they were faster than a junior and cheaper than a senior. In 2026, they are slower than an AI agent and more expensive than the value they add.

This is the layer AI compresses. Not the junior who is still learning. Not the senior who provides judgement. The mid-level coaster, whose cost-to-output ratio has fundamentally changed.

Confusing this layer with junior data engineering is the central error in the current discourse. They look similar from the outside because both involve writing pipelines. They are entirely different things. The junior is on the curve. The mid-level coaster is at the top of a curve they stopped climbing. The first is an investment. The second is a sunk cost the industry has been carrying for years and is now ready to write down.

Why Juniors Are More Valuable Than Ever

The case for juniors in 2026 is stronger than it was in 2020, not weaker.

1. Juniors are the only path to future seniors. Senior judgement is not a credential. It is built over years of being wrong, being corrected, being uncomfortable, being given progressively harder problems, and developing the pattern recognition that only comes from extensive exposure to real systems. There is no shortcut. There is no AI that gives a junior senior judgement. A firm that cuts its grad programme in 2026 has, mechanically, no senior bench in 2031. The capability problem is delayed, not avoided.

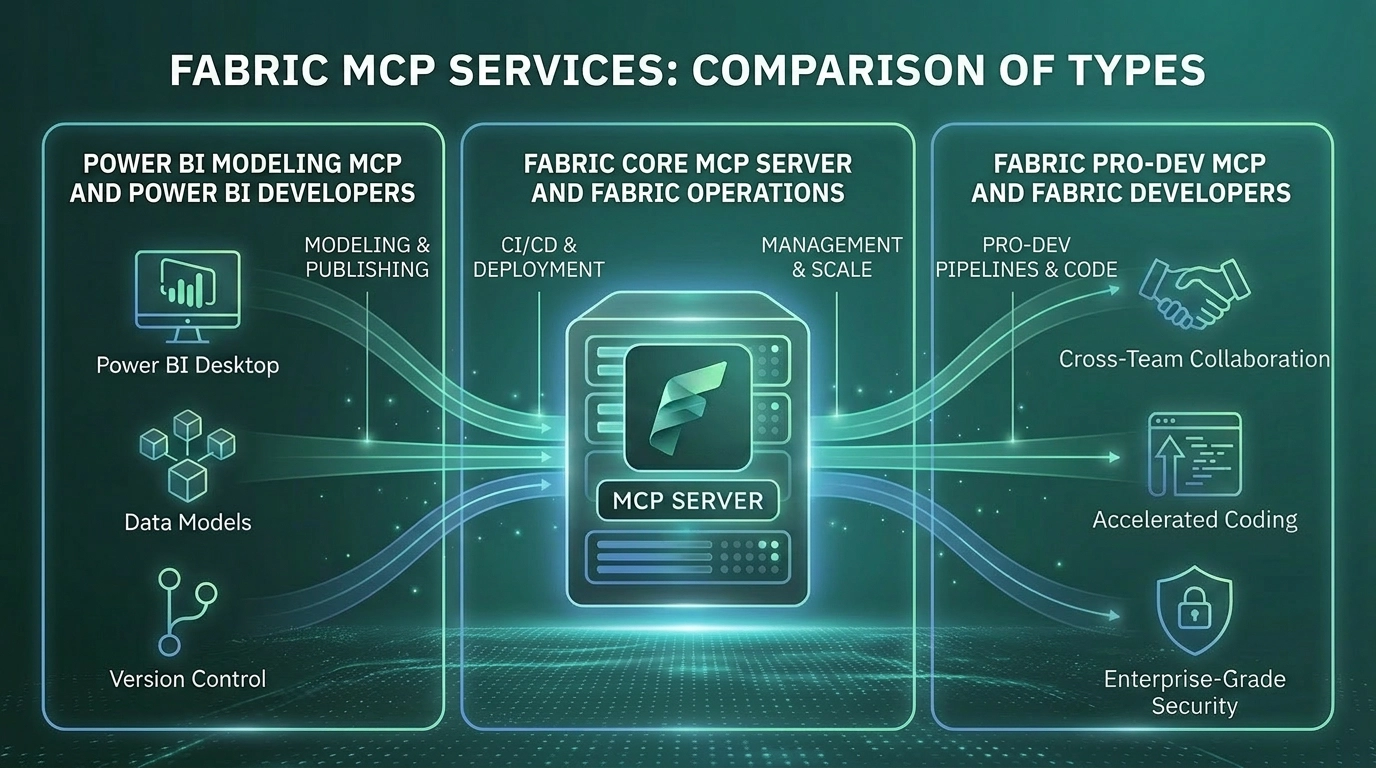

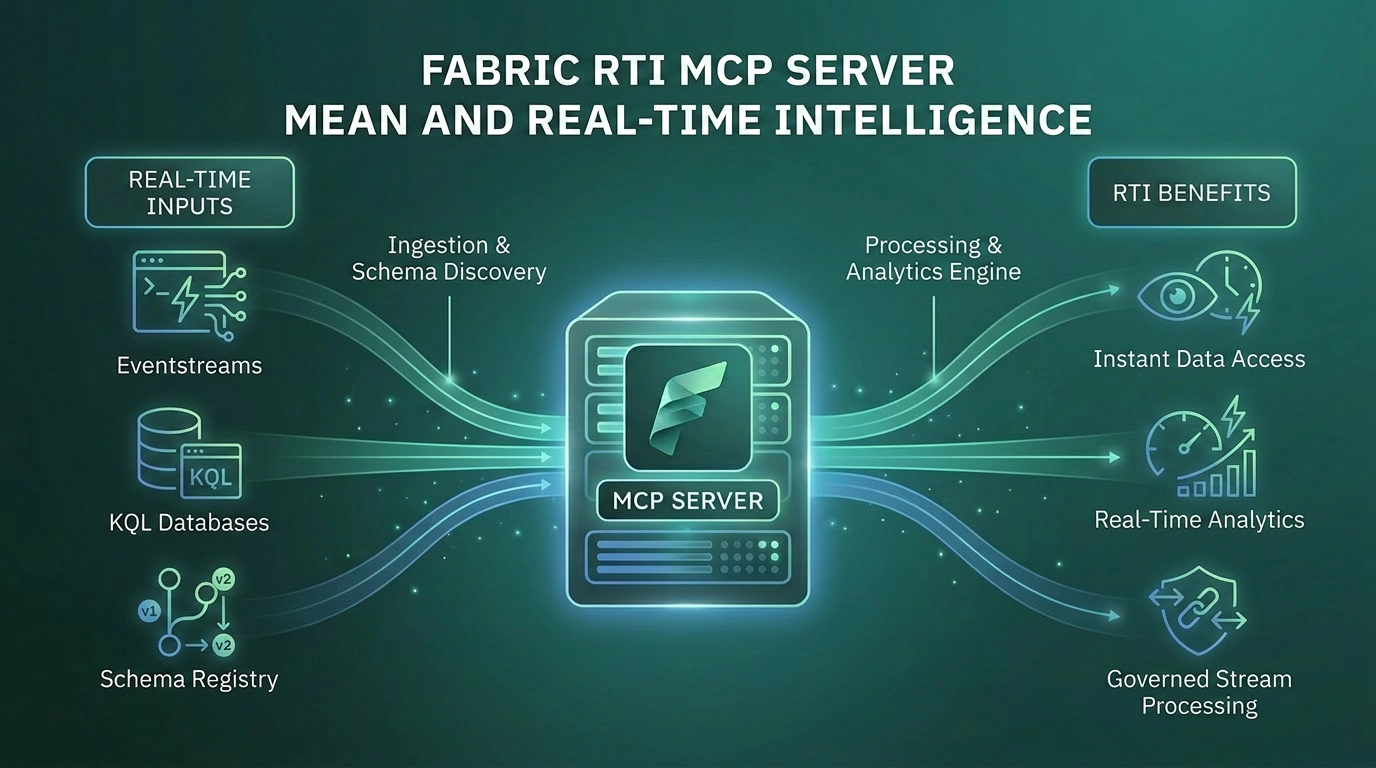

2. AI tooling accelerates junior ramp-up. A junior in 2026 with Pro-Dev MCP, Power BI Modeling MCP, and a thoughtful mentor can produce output that took a junior in 2020 three years to reach. The investment in juniors compounds faster now, not slower. The right move is to invest more heavily, not less.

3. Juniors catch the things seniors miss. Seniors who built their judgement before AI tooling existed have ingrained habits that predate it. They write code the way they always have, sometimes ignoring better tools. Juniors who grew up with these tools use them more naturally. In a well-functioning team, this is a strength. The senior provides modelling judgement. The junior provides tooling fluency. The combination is sharper than either alone.

4. The work that requires real judgement is growing. AI compresses the mechanical layer. It does not compress the volume of business problems that need data infrastructure. Demand for data engineering capability is rising. The supply of senior judgement is not, and cannot be conjured. Firms with strong grad programmes are the only firms with a credible pipeline for that demand.

5. Junior data engineers do most of the data quality work. The unglamorous, painstaking, attention-to-detail work of validating that the numbers are right, that the joins behave correctly, that the historical data still reconciles, that the new pipeline produces the same results as the old one. AI does not do this work. The agent generates plausible code. Someone has to verify the numbers. That someone is usually a junior. Cut the junior and the verification stops happening. The agent's mistakes ship to production.
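The check that "the new pipeline produces the same results as the old one" can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any particular firm's process: the function names, the sample rows, and the tolerance are all hypothetical.

```python
from collections import defaultdict


def totals_by_key(rows, key, measure):
    """Sum a numeric measure per key value (for example, revenue per month)."""
    totals = defaultdict(float)
    for row in rows:
        totals[row[key]] += row[measure]
    return dict(totals)


def reconcile(old_rows, new_rows, key, measure, tolerance=0.005):
    """Return {key: (old_total, new_total)} for every key whose totals differ
    by more than the tolerance, or that exists in only one of the outputs."""
    old_totals = totals_by_key(old_rows, key, measure)
    new_totals = totals_by_key(new_rows, key, measure)
    mismatches = {}
    for k in sorted(set(old_totals) | set(new_totals)):
        old_value = old_totals.get(k)
        new_value = new_totals.get(k)
        if (
            old_value is None
            or new_value is None
            or abs(old_value - new_value) > tolerance
        ):
            mismatches[k] = (old_value, new_value)
    return mismatches


# Hypothetical outputs from an old pipeline and its AI-generated replacement.
old_output = [
    {"month": "2025-01", "revenue": 100.0},
    {"month": "2025-02", "revenue": 250.0},
]
new_output = [
    {"month": "2025-01", "revenue": 100.0},
    {"month": "2025-02", "revenue": 200.0},
    {"month": "2025-02", "revenue": 25.0},
    {"month": "2025-03", "revenue": 80.0},
]

mismatches = reconcile(old_output, new_output, key="month", measure="revenue")
print(mismatches)
# {'2025-02': (250.0, 225.0), '2025-03': (None, 80.0)}
```

The code is the easy part. The work is in deciding which keys and measures to reconcile, what tolerance is acceptable, and then finding out why February is 25.0 short and where March came from.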

The Dishonest Middle Position

Most consultancies will not say junior data engineering is dead. The reputational risk is too high. The recruiting damage is too obvious. The client signal it would send about long-term capability is too negative.

Instead, they say "we still value juniors" in public while doing three things in private. They reduce grad intake by half. They reframe the remaining juniors as "AI-augmented analysts" or some other made-up title that lets them count fewer engineers in the headcount. They quietly raise the bar for junior hires to a level that filters out the candidates who would have made the best long-term engineers, because the firm now demands that a day-one junior produce senior-level output.

This is the dishonest middle. The firms running this playbook are doing the wrong thing while telling themselves they are doing the right thing. They are optimising for a margin number this year and creating a capability gap within five.

The honest version of this conversation is that the role profile of data engineering is genuinely changing. The mechanical work is gone. The judgement work is more valuable. The mid-level coaster is exposed. The junior is more valuable than ever, provided the firm invests in their development with the same seriousness it would have a decade ago. What is dying is not a career stage. It is a way of working that did not require judgement and could be defended only by being cheap. AI has removed the cheap defence.

What This Means for Consultancies

Three concrete things follow from the position above.

1. Keep your grad programme. Improve it. The argument against the grad programme is short-sighted. The argument for it is that it is the only mechanism that produces the seniors of the future, and that argument has not changed. What has changed is what a junior should be doing. Less rote work. More exposure to modelling decisions. More structured mentorship from seniors with judgement. More reading and analysis of AI-generated code, not less. Cutting the programme entirely is the worst possible response.

2. Cull the dead wood, not the new wood. The honest restructuring decision is harder than the easy one. The firms that take this seriously will look at their mid-level layer carefully and ask which engineers have actually developed judgement and which have plateaued. The ones who have plateaued are the ones who should worry, not the new graduates. This is uncomfortable. It is also accurate.

3. Pay seniors what they are worth. The compression of mid-level value should mean that seniors with real judgement see their compensation rise, not fall. Firms that try to hold senior salaries flat while extracting more value from them will lose those seniors to firms that pay for what judgement is worth. The labour market for senior data engineering judgement in 2026 is tighter, not looser.

What This Means for Clients

For clients evaluating consultancies, two questions distinguish the firms that have thought about this honestly from the firms that have not.

The first question is straightforward: "What does your grad programme look like, and how has it changed in the last two years?" Firms that cut grad intake in half and have no defensible explanation are signalling that they are optimising for the current margin at the expense of future capability. Firms that have invested in their grad programme, with a clear position on what juniors should and should not be doing in an AI-augmented environment, are signalling that they intend to be a serious capability provider in five years.

The second question is harder: "Show me an AI-generated artefact your team caught a meaningful mistake in. What was the mistake, and who caught it?" Firms that cannot produce a specific example are firms whose people are accepting AI output uncritically. The mistake might not have been caught yet. It will be, eventually, by the client.

Where Solv Sits

At Solv, we have taken the position above seriously enough to act on it.

We continue to invest in junior data engineering capability. We have changed what we expect from juniors. Less rote pipeline work. More modelling exposure, more domain learning, more structured review of AI-generated artefacts, more time spent thinking about why a particular pattern fits a particular problem.

We are clear-eyed about the mid-level question. We do not carry coasters at any level, and we have not for some time. The compression AI creates in that layer is a force we already account for in how we hire and develop.

We pay our seniors what their judgement is worth. The market is doing this whether firms participate in it or not. We participate in it.

We do not pretend this is the only viable model. Firms that take the opposite view will produce their own results and the market will sort it out over the next five years. What we are unwilling to do is take the dishonest middle position, where the public message is "we value juniors" and the private behaviour is cutting them.