In Short: What Does It Mean to Expose a Fabric Data Agent as an MCP Server?

A published Fabric Data Agent can now act as a Model Context Protocol (MCP) server. External AI systems - including Microsoft Foundry agents, Copilot Studio agents, custom MCP clients, and VS Code in agent mode - can call it as a tool over standard MCP. The agent exposes a single tool, which is the agent itself, and other AI systems call that tool to ask questions of your governed enterprise data in OneLake.

This sounds like a small configuration setting. It is not. It is the change that turns a Fabric Data Agent from a destination users visit inside Fabric into a building block any AI system in your organisation can compose with.

The capability is in public preview, introduced in the November 2025 Fabric release and expanded since.

What Problem Was Microsoft Trying to Solve?

The earlier wave of Fabric Data Agent adoption ran into a specific structural problem. Once a team had built a useful agent - with good source descriptions, well-curated example queries, and a tested instruction set - that work was locked inside Fabric. Users had to come to Fabric to use it. The agent could not participate in workflows that lived elsewhere.

In an enterprise running Microsoft 365 Copilot, Copilot Studio, Microsoft Foundry, and various custom AI applications, this created an obvious tension. The data agent represented the canonical, governed way to ask questions of the organisation's data. Every other AI surface either rebuilt that capability poorly or operated without it. The investment did not compound across the AI estate.

The MCP server capability addresses this directly. A published data agent now exposes itself as a tool that any MCP-compatible system can call. The orchestrating agent in Copilot Studio asks the Fabric Data Agent the data question, gets a governed answer, and combines it with whatever else it is doing. The data agent becomes a service rather than a destination.

The deeper change is that "the right way to ask data questions" stops being a destination users navigate to and starts being a capability the rest of the AI stack consumes automatically.

How Does Exposing a Data Agent as an MCP Server Work?

The mechanics are simpler than the strategic implications.

A Fabric Data Agent published in your tenant has a Model Context Protocol tab in its Settings. That tab exposes an MCP server URL. You can also download a pre-configured mcp.json file from the same tab to drop directly into a VS Code workspace or another MCP client.
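As a rough illustration of what such a client configuration can look like, the sketch below builds a VS Code style mcp.json structure in Python. The server name `fabric-data-agent` and the URL are placeholders, and the layout shown is the general VS Code convention for an HTTP MCP server, not a copy of the file Fabric generates. The file you download from the tab remains the authoritative version.

```python
import json

# Placeholder: copy the real value from the agent's Model Context Protocol tab.
DATA_AGENT_MCP_URL = "https://<your-data-agent-mcp-server-url>"


def build_mcp_config(server_name: str, url: str) -> dict:
    """Return a VS Code style mcp.json structure with one HTTP MCP server."""
    return {"servers": {server_name: {"type": "http", "url": url}}}


# "fabric-data-agent" is a hypothetical label; any name unique in the file works.
config = build_mcp_config("fabric-data-agent", DATA_AGENT_MCP_URL)
print(json.dumps(config, indent=2))
```

In a VS Code workspace, the printed JSON would typically live in `.vscode/mcp.json`.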

The agent appears in the MCP world as a single tool: not one tool per data source, and not one tool per table. The one tool is the agent itself. This is a deliberate design choice, and it means the orchestrator decides whether to call your agent on the strength of a single description.

The description of that single tool is the description you wrote when you published the data agent. The orchestrating AI in Copilot Studio, Foundry, or VS Code reads that description to decide when to invoke your data agent versus another tool it has available. A vague description gives the orchestrator no signal. A specific one - "answers questions about retail sales by store, day, and SKU; covers inventory levels, returns, and promotional uplift" - lets the orchestrator route correctly.

This is the most important practical detail in the entire feature. The description is no longer just a label. It is the routing logic.
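To make the routing point concrete, here is a deliberately simplified sketch. Real orchestrators use a language model to choose a tool, not keyword overlap, so the scoring below is only a stand-in for "how much signal does the description give". The tool names and the CRM description are hypothetical. The specific description is the example quoted above.

```python
import re
from typing import Dict, Optional

# Small stopword set so filler words do not count as routing signal.
STOPWORDS = {"a", "and", "by", "in", "of", "the", "what", "were"}


def tokens(text: str) -> set:
    """Lowercase word tokens, minus stopwords."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOPWORDS


def route(question: str, tools: Dict[str, str]) -> Optional[str]:
    """Pick the tool whose description shares the most words with the question."""
    q = tokens(question)
    scores = {name: len(q & tokens(desc)) for name, desc in tools.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None  # None: no description matched


question = "What were returns by store last week?"

specific_tools = {
    "retail_sales_agent": (
        "answers questions about retail sales by store, day, and SKU; "
        "covers inventory levels, returns, and promotional uplift"
    ),
    "crm_tool": "looks up open deals and account contacts in the CRM",
}
vague_tools = dict(specific_tools, retail_sales_agent="Answers data questions")

print(route(question, specific_tools))
print(route(question, vague_tools))
```

With the specific description, the question is routed to the data agent. With the vague one, nothing matches and the toy router returns `None`, which is the "no signal" case described above.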

Authentication runs through Microsoft Entra ID. End users invoking the agent through an external system still operate under their own identity. Row-Level Security, Column-Level Security, and Microsoft Purview policies continue to apply. The integration does not weaken the security boundary.
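For readers building a custom MCP client, the sketch below shows the general shape of a tool call that carries the user's Entra ID token. The message follows the MCP `tools/call` JSON-RPC format. The tool name, the `question` argument name, and the token value are assumptions for illustration; a real client would read the tool name and input schema from `tools/list` and would complete the MCP initialisation handshake first. Nothing is sent over the network here.

```python
import json
from typing import Tuple


def build_tool_call(tool_name: str, question: str, access_token: str,
                    request_id: int = 1) -> Tuple[dict, dict]:
    """Build HTTP headers and a JSON-RPC body for an MCP tools/call request."""
    headers = {
        # The token is issued to the end user, so the agent runs as that user.
        "Authorization": f"Bearer {access_token}",
        "Content-Type": "application/json",
        "Accept": "application/json, text/event-stream",
    }
    body = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {
            "name": tool_name,
            # "question" is a hypothetical argument name, not the real schema.
            "arguments": {"question": question},
        },
    }
    return headers, body


headers, body = build_tool_call(
    tool_name="retail_sales_agent",          # hypothetical tool name
    question="What was revenue by region last quarter?",
    access_token="<user-access-token>",      # placeholder, acquired via Entra ID
)
print(json.dumps(body, indent=2))
```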

What Changes for AI Architecture?

The shift in how organisations should think about AI architecture is bigger than it first appears, and it falls into five concrete changes.

- Build once, consume everywhere - The investment in a well-configured data agent - instructions, source descriptions, example queries, tested prompts - now compounds across every AI consumption path. The same agent answers questions in Fabric, in M365 Copilot, in a Copilot Studio agent for your sales team, in a Foundry-hosted agent embedded in a customer service application, and in a developer's VS Code session. The work is not duplicated. The governance is not duplicated.

- The data agent description becomes critical infrastructure - In a single-destination world, the description was a label. In an MCP-exposed world, it is the routing logic. Orchestrating agents read it to decide whether to call your agent for a given user question. Time spent making the description specific, scoped, and truthful is now time spent on the equivalent of an API contract.

- Multi-agent workflows become realistic - A Copilot Studio agent for sales can call the Fabric Data Agent for "what was the conversion rate by region last quarter", call a CRM tool for "what deals are in flight", call an email tool to draft a follow-up, and present the result as a single answer. With Data Agents as MCP servers, this becomes practical because the data layer integrates the same way every other tool does.

- Governance survives the integration - Because the data agent enforces RLS, CLS, and Purview at query time, the integration does not create a new governance gap. A sales user calling the agent through Copilot Studio sees the data they would see in Power BI. A finance user calling the same agent sees the data they would see in a Warehouse query. The security boundary is the user's identity, not the consuming system.

- The destination experience becomes one of many - Users in Fabric continue to chat with the data agent as before. Users in Teams, Outlook, Copilot Studio agents, and custom applications now consume the same agent without leaving their tool. For many user populations, the destination chat experience inside Fabric becomes optional.
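The governance point in the list above can be pictured with a toy model. This is not how Fabric implements Row-Level Security; it is a minimal sketch, with made-up users, regions, and figures, showing what it means for the filter to depend on who is asking and not on which surface the question arrives from.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    name: str
    allowed_regions: frozenset


# Made-up sample rows standing in for governed data.
SALES = [
    {"region": "North", "revenue": 120},
    {"region": "South", "revenue": 80},
]


def ask_agent(user: User, surface: str) -> list:
    """Return the rows this user may see; `surface` never affects the filter."""
    return [row for row in SALES if row["region"] in user.allowed_regions]


north_rep = User("north_rep", frozenset({"North"}))
finance = User("finance", frozenset({"North", "South"}))

via_studio = ask_agent(north_rep, surface="Copilot Studio")
via_vscode = ask_agent(north_rep, surface="VS Code")
print(via_studio == via_vscode)       # True: same identity, same rows
print(len(ask_agent(finance, "Foundry")))  # 2: a different identity sees more
```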

Where Does It Fit in the Broader Fabric MCP Story?

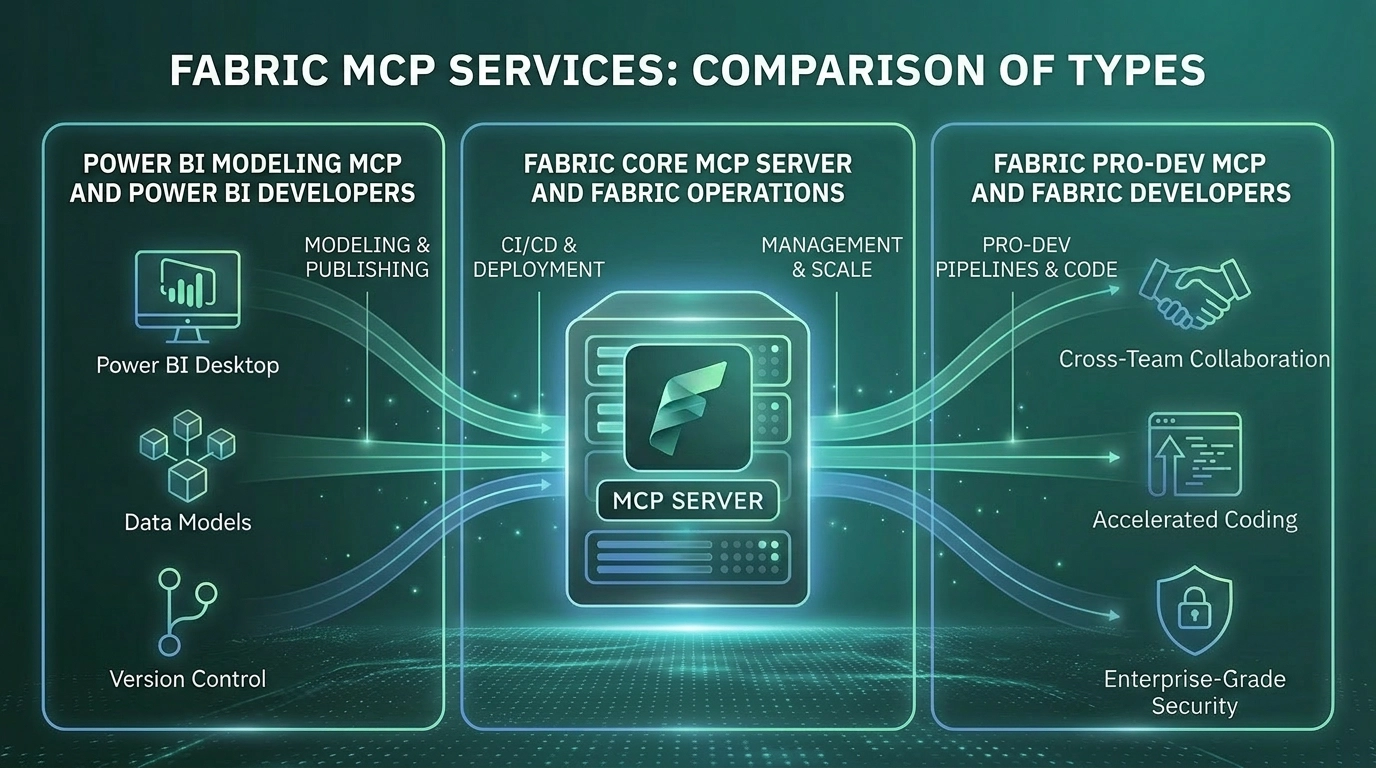

Microsoft has several MCP server families across the Fabric and Power BI surface. The cleanest mental model:

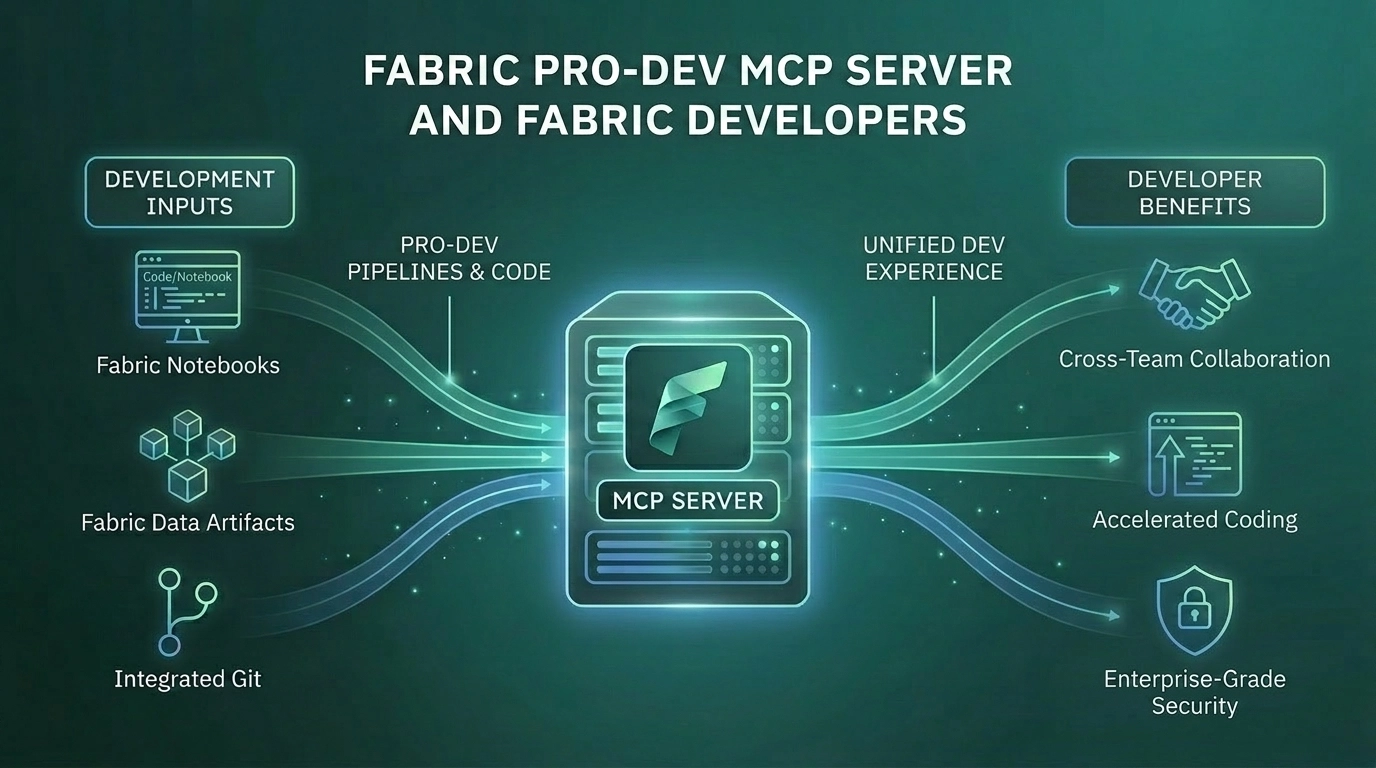

- Fabric Pro-Dev and Core MCP Servers - Expose the platform itself: API documentation, item creation, workspace management, and operations. These are what AI agents call when they need to operate on Fabric.

- Power BI Modeling and Remote MCP Servers - Expose Power BI-specific capabilities. The Modeling server builds and modifies semantic models. The Remote server queries published models.

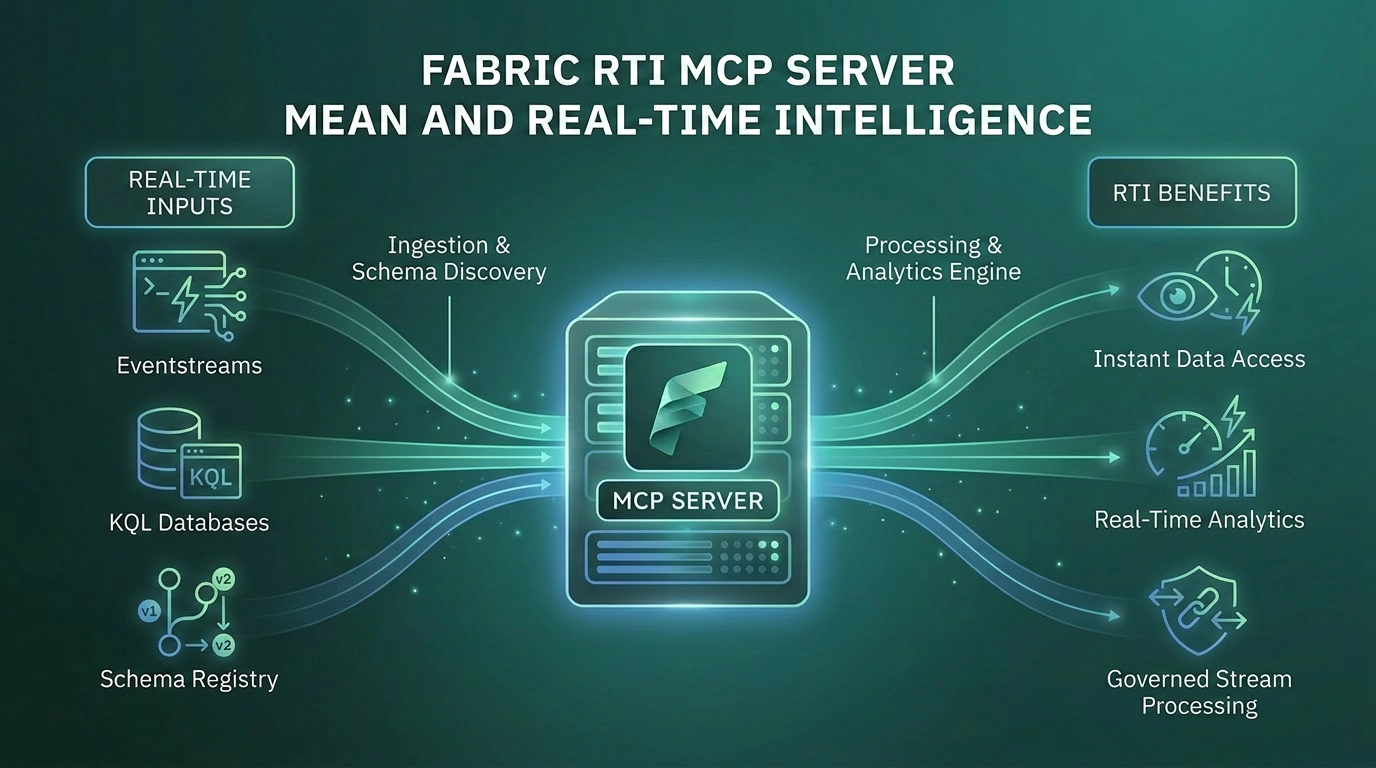

- Fabric RTI MCP Server - Exposes Real-Time Intelligence capabilities, including Eventhouse queries and Activator triggers.

- Data Agent as MCP Server - Different from all of the above. It does not expose a platform capability. It exposes a curated, governed, opinionated answer to a category of business questions, packaged as a single tool.

A serious agentic application in Foundry or Copilot Studio will often combine several of these: the Pro-Dev server for context, the Core server for operations, and one or more Data Agent MCP servers for data answers. The Data Agent as MCP server is the piece that makes that combination usable, because it is the only one that returns governed, business-ready answers rather than raw platform operations.

The Strategic Point Most Organisations Miss

The Data Agent as MCP Server is not a feature. It is a redefinition of what a Fabric Data Agent is.

Most organisations that have built data agents so far have built them as chat experiences. Users go to Fabric, ask a question, get an answer. The architectural model is "data agent equals destination". The MCP capability invalidates this model.

The new model is that a data agent is a reusable capability the entire AI estate can compose with. The same governed answer engine sits behind every AI surface in the organisation. The investment in instructions, source descriptions, and example queries no longer pays back in one product. It pays back wherever AI touches data in the business.

Organisations that miss this point continue to build separate data integration logic into every Copilot Studio agent, every custom application, every Foundry workflow. The work fragments and the governance fragments with it. Organisations that adopt the new model invest deeply in one data agent per business domain, expose each as an MCP server, and consume them everywhere.

This is the difference between treating MCP as a feature and treating MCP as the integration architecture. For organisations serious about agentic AI on the Microsoft stack, the second framing is the only one that scales.

Who Will Get the Most From Data Agents as MCP Servers?

This is most relevant for organisations that:

- Already operate, or plan to operate, multiple AI surfaces - M365 Copilot, Copilot Studio agents, Foundry agents, custom applications - that need access to enterprise data

- Have invested, or are willing to invest, in well-curated Fabric Data Agents with good instructions and source descriptions

- Want governance and identity to remain consistent across all AI consumption paths, not redesigned for each one

- Are building multi-agent workflows where data answers are one component among several

- Have the engineering discipline to treat data agent descriptions as API contracts, including versioning and review
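One way to picture the last point in the list above is to version the description alongside the topics it promises to cover, and to flag any topic that disappears between versions as a breaking change. The `DescriptionContract` type, the topic sets, and the version numbers below are all hypothetical; this is a sketch of a review aid, not a Fabric feature.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DescriptionContract:
    version: str
    text: str
    topics: frozenset  # what the description promises the agent can answer


def breaking_changes(old: DescriptionContract, new: DescriptionContract) -> set:
    """Topics the old description promised that the new one no longer covers."""
    return set(old.topics - new.topics)


v1 = DescriptionContract(
    "1.0.0",
    "Answers questions about retail sales, inventory levels, and returns.",
    frozenset({"sales", "inventory", "returns"}),
)
v2 = DescriptionContract(
    "1.1.0",
    "Answers questions about retail sales and inventory levels.",
    frozenset({"sales", "inventory"}),
)

removed = breaking_changes(v1, v2)
print(sorted(removed))  # ['returns']
```

A dropped topic matters because orchestrators that previously routed returns questions to this agent would stop doing so, which is the kind of change a review step should catch before publishing.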

Organisations with a single AI surface, or with no governed Fabric Data Agents yet built, should start with the agent itself. The MCP capability multiplies the value of an agent that exists and works well. It does not create that value from scratch.

Why Work With Solv Systems on Data Agents as MCP Servers?

At Solv Systems, we help organisations turn well-built Fabric Data Agents into reusable enterprise capabilities consumed across the AI estate.

Agent Design Before Exposure

We make sure the data agent itself is built well - with the right sources, instructions, and example queries - before it gets exposed to other systems. A poorly built agent exposed via MCP is a fast way to surface the same wrong answers across more places.

Descriptions That Route Correctly

We help your team write the agent descriptions that orchestrating systems use to make routing decisions. This is the underrated part of the work and the part that determines whether the integration is useful or invisible.

Multi-Agent Workflow Design

We design the orchestration patterns that combine your data agents, Copilot Studio agents, Foundry agents, and the rest of your AI estate into workflows that actually deliver value. The composition is the work, not just the connections.

Governance Across the Estate

We integrate Data Agent MCP exposure into your existing Entra, Purview, and Fabric governance framework, so the security boundary travels with the agent regardless of which AI surface is consuming it.