In Short: Fabric Wins the Mid-Market

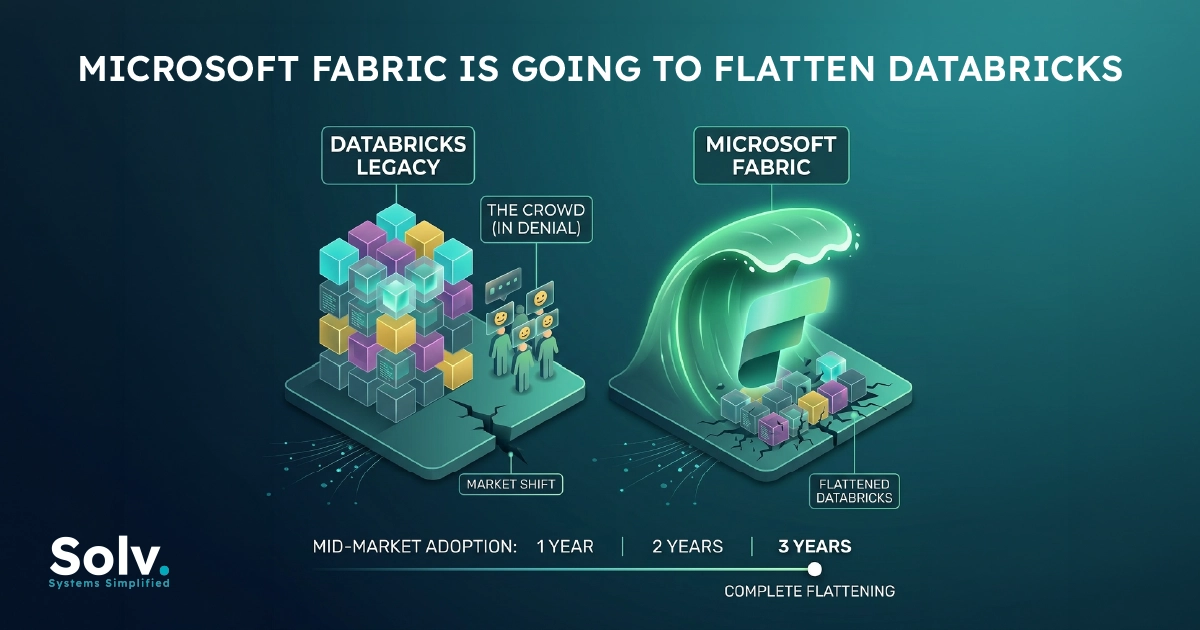

Microsoft Fabric is going to flatten Databricks in the mid-market within three years. The Databricks crowd is in denial about it, and most of their public arguments answer an enterprise-scale question while the mid-market is asking a completely different one.

This is not a popular take in data platform circles. Databricks loyalists are religious about the product. We have implemented both, charged clients for both, and watched real buying decisions get made over the last two years. The pattern is clear enough to write down.

The Databricks Case, Stated Honestly

The strongest arguments for Databricks deserve a fair hearing before they get pushed back on.

Databricks is more mature. The platform has had a decade of compounding investment in Spark, Delta Lake, Photon performance, MLflow, and Unity Catalog. The engineering bench at Databricks is deep. The product reflects that.

Databricks is genuinely best-in-class for heavy machine learning workloads. The integration of MLflow, the model registry, the serving infrastructure, the GPU support, and the way notebooks, pipelines, and model deployment fit together is something Fabric does not match today.

Databricks is multi-cloud by design. If your strategy explicitly requires cloud independence, or your data sits across AWS, Azure, and GCP, Databricks has a much cleaner story than Fabric does.

Databricks is Spark-native, and that matters for organisations with deep Spark expertise on staff. An organisation that has invested in Spark people gets compounding value from the optimisations, the Delta Lake transactional guarantees, the streaming patterns, and SQL Warehouse performance, value it would not get from Fabric.

These arguments are true. They are also mostly irrelevant to the mid-market buying decision, and that is the part the Databricks crowd refuses to engage with.

Why Those Arguments Do Not Hold in the Mid-Market

The mid-market is not a smaller version of the enterprise. It is a structurally different buyer with structurally different constraints.

A mid-market company in 2026 typically has 100 to 1,000 employees, 1TB to 50TB of operational data, a small data team of two to ten people, and a deeply embedded Microsoft 365 estate that already costs real money. The buying decision is made by a CIO or head of data who is balancing a finite budget against an immediate need to ship value, not optimising for engineering elegance.

Platform maturity. Mid-market workloads do not stress a modern data platform the way enterprise workloads do. Fabric being slightly less mature than Databricks is invisible at the volumes mid-market clients actually run.

ML excellence. Most mid-market companies do not have meaningful ML workloads. They have reporting workloads, transformation pipelines, and the occasional regression model. Databricks' superior ML infrastructure is a Ferrari being sold to someone who needs a Toyota.

Multi-cloud. Mid-market companies are almost never multi-cloud. The cloud independence argument solves a problem they do not have.

Spark expertise. The mid-market does not have it. The market for senior Spark engineers is tight and expensive, and mid-market companies cannot win that hiring fight. They have Power BI developers, SQL analysts, and one or two generalist data engineers. Buying a platform that requires expertise the organisation cannot afford is not a sound decision.

The Databricks crowd's response is usually "Databricks is becoming easier to use, SQL-first, you do not need Spark expertise anymore". That is partially true. It also concedes the central point. If the product is racing to abstract away the Spark layer because mid-market buyers cannot operate it, the Spark-native advantage was not really an advantage in the mid-market to begin with.

The Actual Case for Fabric in the Mid-Market

The case for Fabric in the mid-market is not that Fabric is technically superior. It is that Fabric solves the mid-market's actual problem.

1. The pricing is structurally different. An F2 capacity starts at around 262 USD per month. The integration with existing Power BI Premium investment means many mid-market Microsoft customers effectively get Fabric for the cost of their existing Power BI footprint plus a delta. The Databricks comparison at equivalent workload is harder to defend on TCO at this scale, particularly once you include the engineering cost of operating the platform.

2. The integration story compounds. Fabric tenants share the same identity layer as Microsoft 365. The same Entra ID that governs SharePoint, Teams, and Outlook governs Fabric. The same Microsoft Purview labels apply. The same audit trails surface in the same security tools. The same Copilot and agent infrastructure consumes Fabric data through MCP servers. Each of these is a small thing. Together they remove an entire class of integration cost the mid-market cannot absorb.

3. Power BI integration is the killer feature. Direct Lake mode, available only on Fabric, gives Power BI direct access to OneLake data without import or DirectQuery overhead. For BI-heavy mid-market clients, this is not a feature. It is the reason to choose Fabric. Databricks queried from Power BI works. It does not work like this.

4. Skills availability is in Fabric's favour by an order of magnitude. Power BI developers exist everywhere. The skills required to operate Fabric are mostly the skills mid-market companies already have. The skills required to operate Databricks well are scarce and expensive. For a 200-person company hiring data team member number three, this is the deciding factor in most cases we have seen.

5. The 80 percent rule applies. Fabric is good enough for 80 percent of mid-market workloads today and will be good enough for 90 percent within two years. The 10 to 20 percent where Databricks remains genuinely better is real, but it is not where mid-market buyers spend their time.

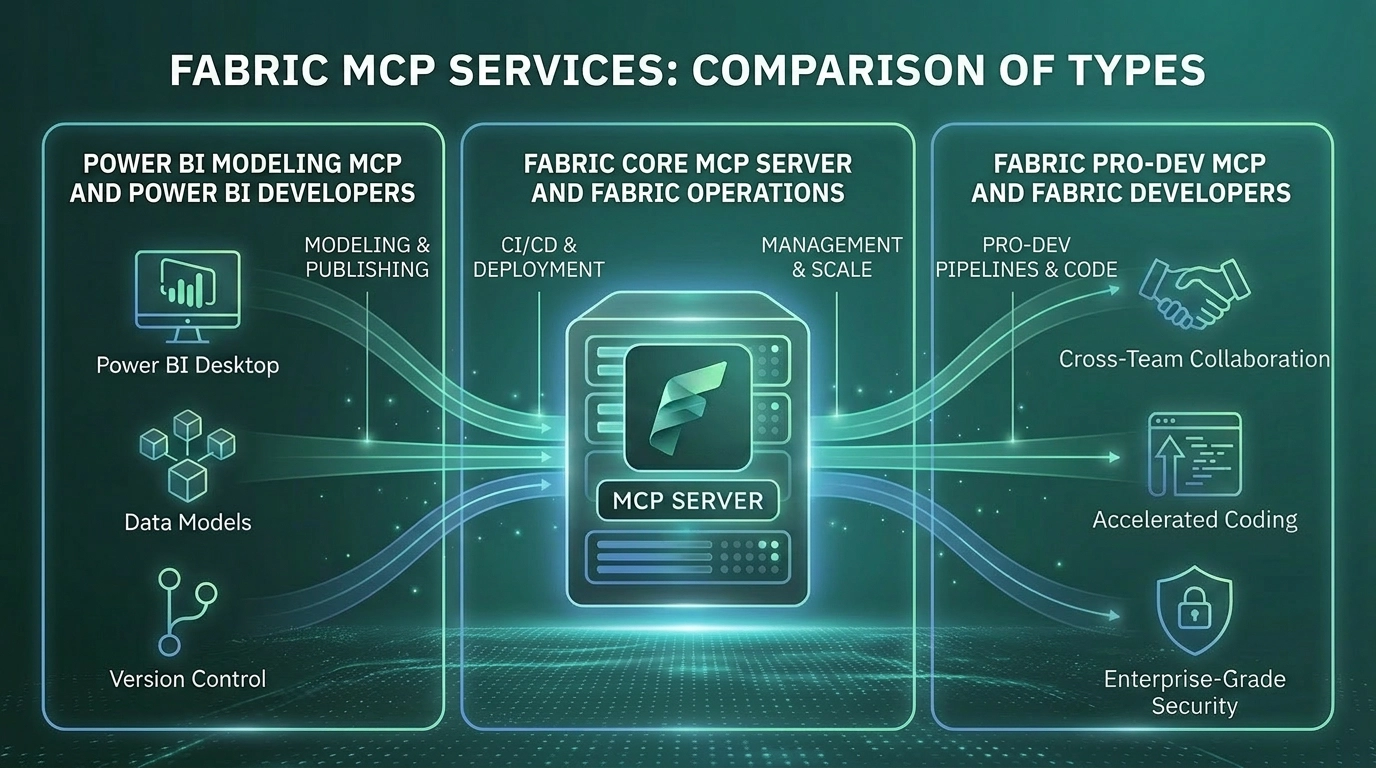

6. The agentic future is more Fabric-aligned. Microsoft Foundry, Copilot Studio, the entire MCP server family covering Fabric Pro-Dev, Core, RTI, and Data Agents: the agentic stack Microsoft is building runs on Fabric natively. Databricks has its own agentic story, and it is competent, but the integration with the Microsoft AI surface that mid-market companies are actually deploying is fundamentally tighter for Fabric.
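The TCO argument in point 1 comes down to simple arithmetic: platform spend plus the cost of the people who operate it. Below is a minimal sketch of that sum in Python. Only the 262 USD per month F2 entry price comes from this article; the `PlatformCost` class, its field names, and every other number are hypothetical placeholders to be replaced with your own quotes and salary data.

```python
from dataclasses import dataclass


@dataclass
class PlatformCost:
    """Hypothetical cost model: platform spend plus operating effort."""
    name: str
    monthly_platform_usd: float  # capacity, licence, or compute spend per month
    engineer_fte: float          # share of engineer time spent running the platform
    engineer_annual_usd: float   # fully loaded annual cost of one engineer

    def annual_tco(self) -> float:
        # Twelve months of platform spend plus the engineering cost of operating it.
        platform = self.monthly_platform_usd * 12
        people = self.engineer_fte * self.engineer_annual_usd
        return platform + people


# The F2 entry price is the article's figure; operating effort is set to zero
# here only to show the platform component on its own.
fabric_f2 = PlatformCost("Fabric F2", 262.0, 0.0, 0.0)
print(fabric_f2.annual_tco())  # 3144.0

# Placeholder inputs (assumptions, not market data) to show the full sum.
example = PlatformCost("placeholder platform", 100.0, 0.5, 100_000.0)
print(example.annual_tco())  # 51200.0
```

Even with placeholder inputs, the shape of the result is the point: at mid-market scale the people term usually dwarfs the platform term, which is why skills availability weighs so heavily on the comparison.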

Where Databricks Still Wins, and Will Keep Winning

This is not an article saying Databricks is dying. It is an article saying Databricks loses the mid-market specifically.

Databricks wins, and will keep winning, in:

- Enterprise-scale data platforms where the data volumes genuinely stress the platform

- Heavy ML and AI workloads where MLflow, the model registry, and the serving infrastructure provide compounding value

- Organisations with material Spark investment and the expertise to extract value from it

- Multi-cloud requirements that are not negotiable

- Performance-critical workloads where Photon's optimisations make a measurable financial difference

- Organisations whose data team has the depth to operate Databricks at the level it rewards

This is a real, defensible, sizeable market. Databricks is not going anywhere in enterprise. The argument is about the mid-market specifically, and about the buyer profile making most of the data platform decisions outside the Fortune 500.

The Three-Year Prediction, With Receipts

Three years is the timeframe because three things compound over that window.

Year one is happening now. Microsoft is shipping the Fabric features that close the gap with Databricks for the workloads the mid-market actually runs. Notebooks have improved substantially. Mirroring has matured. The data engineering surface is now genuinely usable for the volumes the mid-market produces. We see this in our own implementations.

Year two is the integration year. Foundry, the MCP server family, Copilot Studio, Direct Lake at scale, and the bundling story with Microsoft 365 compound through 2027. The reasons to choose Fabric stop being "it is the obvious Microsoft choice" and start being "it is the obvious choice, full stop, for a Microsoft-estate mid-market buyer".

Year three is the consequence year. Mid-market organisations make data platform decisions on five-to-seven-year cycles. The wave of decisions made in favour of Fabric in 2025 and 2026 will be operational and visible by 2028. The market share data will show what current implementations already show: Fabric is the default in the mid-market, and Databricks is the considered exception.

Flatten is the right word. Not eliminate. Databricks does not disappear from the mid-market. It becomes the choice for the 15 to 20 percent of mid-market buyers with specific requirements that genuinely favour it, and it loses the rest.

Why the Databricks Crowd Is in Denial

The denial is not because the Databricks crowd is wrong about Databricks being a good product. They are right about that. The denial is because they are answering an enterprise question when the market is asking a mid-market question.

When a Databricks advocate argues platform maturity, Spark performance, MLflow excellence, or multi-cloud flexibility to a mid-market buyer, they are making technically correct arguments about a context the buyer is not in. The buyer wants to know whether their existing Power BI developers can be productive next quarter, what the all-in cost looks like compared to staying on the current stack, and whether the platform will work with their Microsoft 365 investment without a separate integration project. These are not questions Databricks' marketing answers well.

The other layer of denial is professional. The Databricks ecosystem has a generation of consultants, partners, and product specialists who have built careers on the platform. The position "this is the better platform" is also "my career path is the better career path". The two are difficult to separate.

What This Means for Buyers

For mid-market buyers evaluating the choice in 2026, three observations.

First, the right question is not "which platform is technically better". It is "which platform fits the team, the budget, and the existing estate". For most Microsoft-estate mid-market buyers, Fabric answers this question correctly. For most multi-cloud, ML-heavy, or Spark-fluent mid-market buyers, Databricks does. Match the platform to the actual context.

Second, do not let either vendor argue you out of your own reality. The platform that is right for your organisation is the one that works for your team, your data, and your roadmap. Vendor advocates on either side are not always optimising for your interests.

Third, if you are already on a Microsoft estate with meaningful Power BI investment, the burden of proof is now on Databricks to demonstrate why you should pay the integration cost. That has not been true historically. It is true in 2026. The default has shifted.
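The three observations above can be read as a rough decision heuristic. The sketch below writes it out in Python. The `BuyerContext` fields and the `default_platform` function are hypothetical names, and checking the Databricks-favouring conditions first is our assumption about precedence, not a rule stated anywhere above. Treat it as a starting point for a conversation, not a scoring model.

```python
from dataclasses import dataclass


@dataclass
class BuyerContext:
    """Hypothetical summary of a mid-market buyer's situation."""
    microsoft_estate: bool      # Microsoft 365 is the embedded estate
    power_bi_investment: bool   # meaningful existing Power BI footprint
    multi_cloud_required: bool  # cloud independence is non-negotiable
    ml_heavy: bool              # material ML workloads, not the odd regression
    spark_fluent_team: bool     # Spark expertise already on staff


def default_platform(ctx: BuyerContext) -> str:
    # Assumption: specific requirements that favour Databricks take
    # precedence over the Microsoft-estate default.
    if ctx.multi_cloud_required or ctx.ml_heavy or ctx.spark_fluent_team:
        return "Databricks"
    # Microsoft estate plus Power BI investment: burden of proof is on Databricks.
    if ctx.microsoft_estate and ctx.power_bi_investment:
        return "Fabric"
    # Neither profile applies cleanly, so no default is implied.
    return "evaluate both"


typical = BuyerContext(
    microsoft_estate=True,
    power_bi_investment=True,
    multi_cloud_required=False,
    ml_heavy=False,
    spark_fluent_team=False,
)
print(default_platform(typical))  # Fabric
```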

Where Solv Sits

At Solv we have implemented Fabric on most engagements, and Databricks where the client context warranted it. We have charged real money for both. We are not a Fabric-only firm by ideology. We are a Fabric-first firm because Fabric is the right answer for the buyer profile we serve most often.

When a prospective client describes a mid-market estate on Microsoft 365 with real Power BI investment, we recommend Fabric. When a prospective client describes a multi-cloud, ML-heavy, Spark-fluent organisation, we recommend Databricks, and we will tell them that. The recommendation reflects the buyer's actual context, not the platform we would prefer to deliver.

This is the position the rest of the consulting market will arrive at over the next three years. We have been there for a while.